User Intent Optimization: What It Is and Why It Matters in AEO (AI SEO and AI Brand Visibility)

User intent denotes the motivation behind a search query. Identifying it lets organizations align content and marketing with audience needs, improving relevance and business outcomes. This article explains user intent optimization for AI-driven brand visibility, how search systems infer intent, and the commercial benefits of intent-focused services.

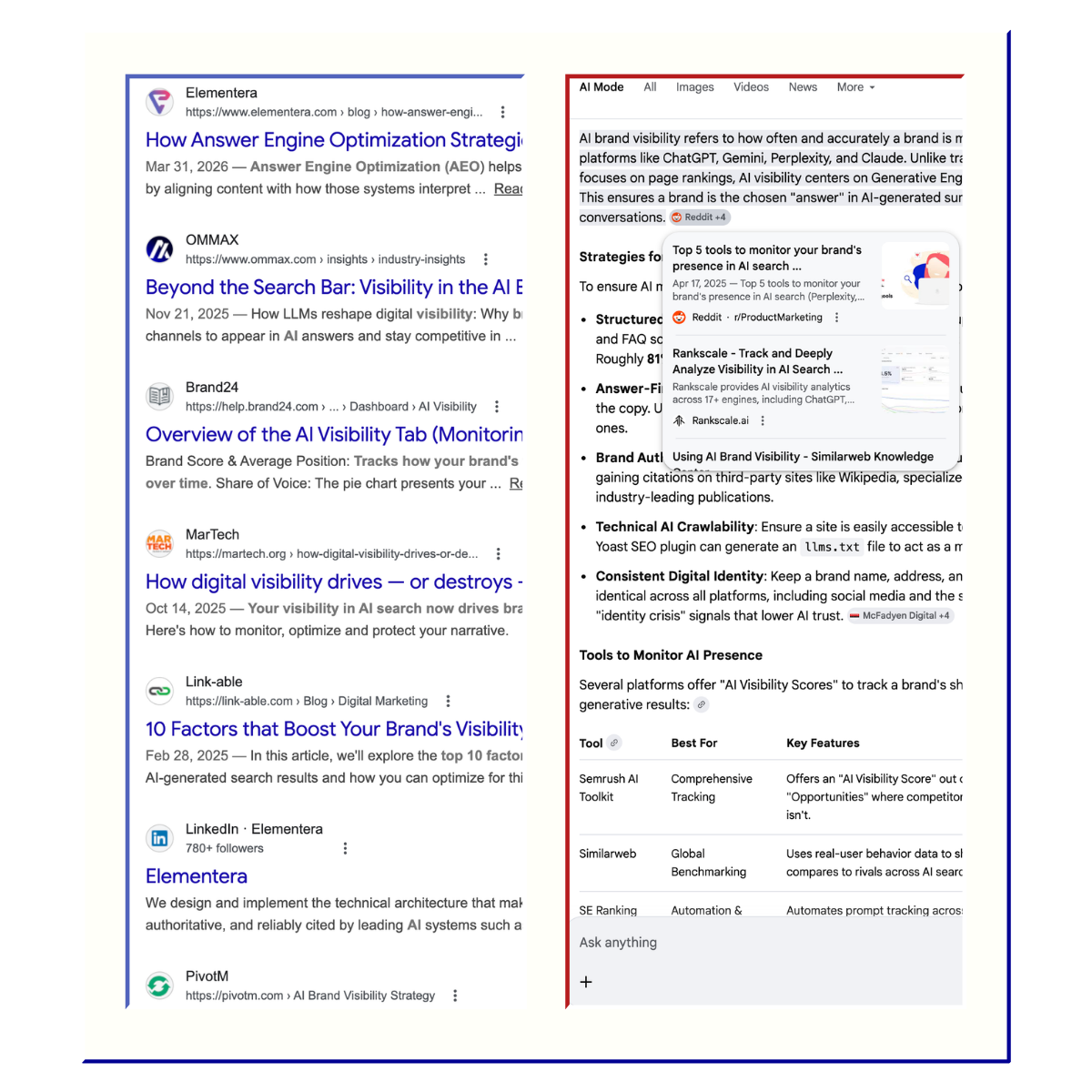

Search is no longer just a list of links. Increasingly, people get a short, AI-written answer first, along with a handful of links that support it. Google's AI Overviews deliver an AI-generated snapshot with key information and links to dig deeper. ChatGPT's search feature returns timely answers with inline citations. Perplexity numbers every source so users can verify. Claude's web search provides direct citations for fact-checking. And Gemini shows source links when it draws heavily from a page.

This shift changes the visibility game entirely. If the "main screen" is an answer, the question for marketers becomes: Will the AI use my content to build that answer, and will it cite or mention my brand while doing so?

That is what user intent optimization addresses. Not tricks or shortcuts, but a disciplined approach to building content that AI systems can confidently use as evidence for the answers people are asking for.

Key Takeaways

- User intent is the "why" behind a query, not the keywords. AI systems select content based on how well it satisfies the real goal a person is trying to accomplish.

- AI answer engines do not just rank pages. They use your content to build answers and decide whether to cite your brand while doing it.

- Modern AI search relies on semantic matching, passage-level selection, and query fan-out, which means content must address the full depth of an intent, not just the surface-level topic.

- Citations in AI answers are a new form of brand visibility. Being selected as a source creates an impression and a trust signal even when users never click through.

- Answer Engine Optimization (AEO) is not a separate discipline from SEO. It is the same fundamentals held to a higher standard: intent alignment, evidence clarity, and genuinely helpful content.

- Generic, keyword-stuffed content fails in AI search. Brands get cited when they provide something the AI does not already have: original research, specific expertise, or the clearest explanation available.

- Structure is not formatting. It is intent signalling. Content organized around the sub-questions a user actually has is easier for AI to extract, cite, and trust.

What User Intent Actually Means

User intent is the "why" behind a query. A query is what someone types. Intent is what they are trying to get done.

For example, when someone searches "best payroll software," the query is three words. But the intent behind it might be: "Help me choose a payroll tool that fits my company, budget, and country so I can buy with confidence."

This is not just a theoretical distinction. Google's Search Quality Evaluator Guidelines explicitly frame quality around whether results meet the user's intent for a given query. The "Needs Met" rating is built on this idea: the same short query can carry multiple plausible intents, and evaluators are trained to think about what most users likely want.

Two things matter here for anyone creating business content. First, intent is almost always more specific than the keywords suggest. A search for "AI visibility" could mean a diagnostic of how a brand appears in AI search tools like ChatGPT, a technical AEO gap analysis, or a full strategy for improving inclusion in AI-generated answers. Second, intent includes context beyond the topic itself. Google describes using signals like location, past search history, and settings to determine what is most relevant in the moment.

Why Intent Matters More in AEO (GEO, AI SEO, and AI Search) Than in Classic SEO

Classic SEO was often treated as "match the keywords." Modern search is much closer to "match the meaning," and AI answer engines push that bar even further.

Google's own ranking systems already focus on concepts and sections rather than exact words. RankBrain helps Google understand how words relate to concepts, returning relevant content even when it does not contain the exact words used. Neural matching works with "fuzzier representations of concepts" rather than relying solely on keywords. And passage ranking identifies individual sections of a page to assess relevance at the paragraph level.

AI answer experiences raise the bar further because they are trying to solve the full task, not just route you to a page. Google's AI Overviews and AI Mode may use a "query fan-out" technique, issuing multiple related searches across subtopics to develop a complete response. In other words, AI systems often break a user's intent into sub-intents, look for supporting evidence, and then assemble an answer from the best available sources.

Content that only "kind of relates" to the topic, or only answers one narrow slice of the task, is less likely to be selected when the system is explicitly trying to satisfy the whole intent.
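The fan-out idea can be made concrete with a small sketch. It assumes a fixed, hypothetical list of facets (`FACETS`) and simple substring matching; real systems generate sub-queries with a model and retrieve against an index, so treat this only as an illustration of why a page that covers one slice scores poorly against the whole intent.

```python
# Hypothetical facets a system might expand a buying query into.
FACETS = ["pricing", "integrations", "setup time", "compliance"]

def fan_out(query: str, facets: list[str]) -> dict[str, str]:
    """Expand one query into one narrower sub-query per facet."""
    return {facet: f"{query} {facet}" for facet in facets}

def covered_facets(page_text: str, facets: list[str]) -> list[str]:
    """Return the facets that the page mentions at all (naive substring check)."""
    text = page_text.lower()
    return [facet for facet in facets if facet.lower() in text]

sub_queries = fan_out("best payroll software Canada", FACETS)

thin_page = "Our top 10 payroll tools, ranked."
full_page = (
    "We compare pricing, integrations, setup time, "
    "and compliance coverage for each tool."
)

for name, page in (("thin", thin_page), ("full", full_page)):
    hits = covered_facets(page, FACETS)
    print(name, f"{len(hits)}/{len(FACETS)} facets covered:", hits)
```

The thin page matches the topic but covers none of the sub-queries, while the fuller page gives the system something to use for each one.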

From Keywords to Meaning: How AI Engines Interpret Intent

Understanding how AI engines actually process queries helps explain why intent optimization works. While every platform behaves differently, most modern AI search experiences follow a similar pipeline: interpret the request, retrieve candidate sources, rank evidence, generate an answer, and attach citations.

Natural Language Processing and Tokenization

The first step in understanding intent is Natural Language Processing (NLP). This is the technology that allows computers to understand human speech and text. When a query is submitted, the AI performs tokenization. This involves breaking the sentence into individual words or "tokens".

The system then looks at the grammatical role of each word, identifying nouns, verbs, and adjectives to understand the structure of the request. Classic NLP pipelines also normalize words through stemming and lemmatization. Stemming chops off word endings to reach a base form, while lemmatization maps a word to its dictionary form. For instance, "searching," "searched," and "searches" are all reduced to "search." This helps the engine understand that different forms of a word often represent the same underlying intent.
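As a rough illustration, here is a minimal sketch of tokenization and suffix-stripping in plain Python. The suffix list and the three-character minimum are assumptions made for this example, not how any particular engine works; production systems typically rely on subword tokenizers and learned models rather than hand-written rules.

```python
import re

# A deliberately tiny, hypothetical rule set, tried in this order.
SUFFIXES = ("ing", "ed", "es", "s")

def tokenize(query: str) -> list[str]:
    """Lowercase the query and split it into word tokens."""
    return re.findall(r"[a-z0-9]+", query.lower())

def naive_stem(token: str) -> str:
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token

tokens = tokenize("Searching, searched, and searches")
stems = [naive_stem(t) for t in tokens]
print(tokens)  # ['searching', 'searched', 'and', 'searches']
print(stems)   # ['search', 'search', 'and', 'search']
```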

They translate natural language into meaning

People write longer, more conversational queries now. Google explained that applying BERT helped it better understand queries where small words like "to" or "for" change the meaning entirely. The system is trying to understand relationships between words and the real-world situation, not just match a phrase.

Underneath this, AI engines use semantic search to bridge the gap between how humans speak and how computers process data. Where lexical search looks for literal word matches, semantic search focuses on the meaning behind the words and the relationships between concepts. A page that clearly states who something is for, when it applies, and what "good" looks like helps the model lock onto the right interpretation.

They use context from the session and user signals

Conversational engines consider follow-ups. OpenAI describes how ChatGPT search lets users go deeper with follow-up questions while considering the full context of the chat. Perplexity describes a similar contextual memory feature, remembering earlier context for follow-up questions. Traditional search also uses context; Google factors in location, search history, and settings.

For content creators, this means intent is often a moving target inside a conversation. Someone might start "informational," then quickly shift to "comparison," then "transactional." Your content needs to support the whole arc, not just the first question.

They retrieve and rank evidence using semantic similarity

Many AI answer systems rely on retrieval-augmented generation (RAG), an approach that combines a language model with a retriever over external content. A common building block for this retrieval is embeddings: numerical representations that capture concepts and semantic relationships. Items that are numerically similar tend to be semantically similar, which is why embeddings power search and clustering.

This matters because the system is not only matching exact keywords. It is matching meaning. Pages that use clear language, define terms, and include common synonyms make it easier for semantic retrieval to find them for the right intent.
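To show what "numerically similar" means, the sketch below ranks a few passages by cosine similarity. The three-dimensional vectors are hand-made stand-ins (real embeddings come from a trained model and have hundreds or thousands of dimensions), and the passage texts are hypothetical.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy vectors; the dimensions loosely stand for payroll, security, cooking.
TOY_EMBEDDINGS = {
    "payroll software for small teams": [0.9, 0.1, 0.0],
    "how to run employee salaries": [0.8, 0.2, 0.1],
    "SOC 2 audit checklist": [0.1, 0.9, 0.0],
    "easy weeknight pasta recipes": [0.0, 0.0, 1.0],
}

def rank(query_vec: list[float], corpus: dict[str, list[float]]) -> list[str]:
    """Return passage texts ordered from most to least similar to the query."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in corpus.items()]
    return [text for _, text in sorted(scored, reverse=True)]

# Assumed vector for the query "tool to pay my staff".
query_vec = [0.85, 0.15, 0.05]
ranking = rank(query_vec, TOY_EMBEDDINGS)
for text in ranking:
    print(text)
```

Note that the query shares no words with either of the two payroll passages, yet both rank at the top because their vectors point in nearly the same direction.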

They select passages, not entire pages

Google explicitly describes passage ranking as identifying individual sections of a web page to assess relevance. In AI answer contexts, this is even more important: the system might only need one definition, one step list, or one comparison paragraph. If your key content is buried or mixed with unrelated information, you are making it harder to extract the exact passage that satisfies the user's need.

This is why content modularization matters. Each section should be able to stand on its own as a complete answer to a sub-question, so the AI can pull the right piece without needing to parse the whole page.
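A minimal sketch of passage-level selection follows. It assumes the page is already split into sections keyed by heading and uses plain word overlap as a stand-in for the semantic scoring a real engine would apply. The page content is illustrative.

```python
import re

def word_set(text: str) -> set[str]:
    """Lowercased set of word tokens in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def best_passage(sections: dict[str, str], question: str) -> str:
    """Return the heading of the section sharing the most words with the question."""
    question_words = word_set(question)

    def overlap(item: tuple[str, str]) -> int:
        heading, body = item
        return len(question_words & word_set(heading + " " + body))

    heading, _ = max(sections.items(), key=overlap)
    return heading

# Hypothetical page, one self-contained section per sub-question.
PAGE = {
    "What is SOC 2?": "SOC 2 is a third-party audit of how you protect customer data.",
    "How long does SOC 2 take?": "The timeline depends on your scope and how long the audit window is.",
    "SOC 2 vs ISO 27001": "SOC 2 is an attestation report, while ISO 27001 is a certification.",
}

print(best_passage(PAGE, "how long does soc 2 take for a startup"))
print(best_passage(PAGE, "soc 2 vs iso 27001"))
```

Because each section answers one sub-question under a heading phrased like the question itself, the right passage is easy to pick out without reading the rest of the page.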

They organize information around entities, not just keywords

In AEO, an entity is a distinct, well-defined thing or concept: a person, a place, a brand, or an abstract idea. AI systems organize these entities into knowledge graphs, which map how different things relate to each other. When a brand is a recognized entity, AI assistants can distinguish it from generic words. Without proper entity signals, a company named "AI Monitor" might be confused with tools for monitoring AI systems in general.

Building entity recognition requires clear, consistent branding across your content, structured data markup, and being referenced by authoritative third-party sources.

They show citations, which becomes a visibility channel

Several major AI experiences explicitly present sources. ChatGPT search includes inline citations and a Sources panel. Claude web search includes cited sources drawn from search results. Perplexity includes numbered citations linking to original sources. Google AI Overviews provide links to learn more.

When citations are part of the interface, being selected as a source is not just about traffic. It is brand visibility at the moment a user is making sense of a problem and deciding what to do next.

A Modern Taxonomy of User Intent

The classic buckets of informational, navigational, and transactional intent are still useful, but they are too simple for AI-era queries that often blend multiple goals. Google's own quality guidance shows that intent can include browsing, researching, comparing, and location-based needs. Here is a more practical map for AEO, GEO, and AI SEO:

- Informational: "Help me understand." Example: "What is SOC 2?" Even here, AI systems prefer sources that define clearly and add necessary context rather than vague overviews.

- Navigational: "Take me to a specific place." Example: "Notion pricing" or "CRA payroll deductions table." Google's guidelines treat this as "clear website intent" and stress honoring it when it is obvious. Modern navigational intent also includes finding specific functions within a digital product, such as a support center or account portal.

- Transactional: "Help me buy, sign up, or contact." Example: "Book a brand visibility audit Vancouver." The quality guidelines explicitly discuss product and purchase-related intents, including research that leads to a purchase.

- Investigative or Comparison: "Help me decide between options." Example: "Rippling vs BambooHR for US payroll." Google positions AI Mode as especially useful for complex comparisons that might have previously required multiple searches. AI engines look for structured data like tables, feature lists, and pros-and-cons to build these comparisons.

- Problem-solving or Task-based: "Help me do the thing." Example: "How do I migrate from HubSpot to Salesforce without losing attribution?" This is where query fan-out is most visible. One question can require multiple sub-searches to satisfy the full task. Brands that provide clear, step-by-step solutions are often cited as the authoritative source.

- Conversational and Iterative: "I am exploring through dialogue." In this category, the user's goal is not fixed at the start. They might begin with "I want to eat healthier" and gradually refine through follow-ups. This iterative process requires content that is modular and covers the journey from the broad "why" to the specific "how."

In practice, real queries blend these categories. A user might start with "What is SOC 2?" (informational), then ask "SOC 2 vs ISO 27001?" (comparison), then "How long does SOC 2 take for a seed-stage SaaS?" (task and planning), and finally "SOC 2 auditor Canada cost" (transactional). Conversational systems are built for this behavior.
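For planning purposes, the arc above can be roughly tagged with a few keyword cues. The sketch below is a crude heuristic with hypothetical rules (`RULES`), useful for sorting a query list into buckets. It is not how AI engines infer intent, and it will mislabel many real queries.

```python
# Hypothetical cue lists, checked in order; the first match wins.
RULES = [
    ("comparison", (" vs ", " versus ", "compare")),
    ("task", ("how do i", "how to", "how long")),
    ("transactional", ("book", "buy", "cost", "sign up")),
    ("informational", ("what is", "what are", "why")),
]

def classify(query: str) -> str:
    """Return the first intent label whose cue appears in the query."""
    padded = f" {query.lower()} "
    for label, cues in RULES:
        if any(cue in padded for cue in cues):
            return label
    return "unclear"

journey = [
    "What is SOC 2?",
    "SOC 2 vs ISO 27001?",
    "How long does SOC 2 take for a seed-stage SaaS?",
    "SOC 2 auditor Canada cost",
]
for query in journey:
    print(f"{classify(query):14} {query}")
```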

How Intent Optimization Drives AI Brand Visibility

When an AI engine produces an answer with sources, it is making a choice about whose content becomes the evidence. That choice influences which brands a user sees right beside the answer.

"AI brand visibility" is, at its core, evidence visibility. Your brand is visible if your page is a supporting link. Your brand is visible if your research, definitions, or data are cited. Your brand can be visible even if the user never clicks, because the citation itself is an impression and a trust signal.

Recent research suggests that as many as 64% of mobile searches do not result in a click to a website. In those cases, users get what they need directly from the AI Overview or chatbot response. While this may look like lost traffic, it is also a shift in where brand interaction happens: being cited by the AI becomes a form of visibility in its own right. Over time, that brand lift can lead to more direct searches and higher conversion rates when the user eventually does visit your site.

What makes AI engines choose one source over another

AI engines are selective about the sources they use. They do not just pick the most popular site; they look for the source that most accurately satisfies the detected intent. Several factors influence this:

- Directness. Does the content provide a clear answer early? AI models prefer passages they can easily extract without sifting through long introductions.

- Authority and Expertise (E-E-A-T). AI engines look for signals that the content was created by a real expert, including detailed author bios, links to professional profiles, and original research. Google's helpful content guidance frames ranking systems as prioritizing content made to benefit people rather than content made to manipulate rankings.

- Structure and Readability. Content broken into clear sections with descriptive headers is easier for AI to parse. If text is modular, the AI can pull specific passages to answer sub-questions.

- Contextual Relevance. The AI looks for a strong match between the user's specific constraints (like location or budget) and the source's specificity.

- Consensus and Reputation. AI models cross-reference information across multiple sources. Research from Discovered Labs and Yext shows that different engines weigh consensus differently, but being frequently mentioned in positive ways across third-party sites consistently helps.

How different AI engines choose what to cite

While the core principles are similar, each engine has its own retrieval preferences. ChatGPT tends to favor consensus across established authoritative sources and community-driven platforms. Google Gemini and AI Overviews are heavily grounded in the traditional Google search index, so brands with strong organic SEO foundations have an advantage there. Perplexity performs real-time web retrieval for every query, making it excellent for current information and favoring up-to-date, technically scannable content. Claude tends toward deep reading and structured, data-rich content.

A practical implication: there is no single "AI SEO" playbook. But the common thread across all these engines is that content which clearly satisfies user intent, backed by real expertise, gets selected more often.

Measuring AI brand visibility

Traditional metrics like keyword rankings no longer tell the whole story. Marketers and founders should also track "citation share": how often their brand is mentioned or cited by different AI engines for high-intent queries. If an AI engine is asked for the "best project management software for agencies" and lists five brands, those brands are the ones winning the visibility game. Tracking these mentions across platforms provides a clearer picture of your brand's standing in the AI ecosystem.
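One simple way to compute citation share is sketched below. It assumes you have already collected, for each engine, the list of brands mentioned in each sampled answer. The engine names, brand names, and sample data are all hypothetical.

```python
def citation_share(answers: list[list[str]], brand: str) -> float:
    """Fraction of sampled answers that mention the brand at least once."""
    if not answers:
        return 0.0
    cited = sum(1 for brands in answers if brand in brands)
    return cited / len(answers)

# Hypothetical samples: one inner list per AI answer to a high-intent query.
SAMPLED_ANSWERS = {
    "engine_a": [["BrandX", "BrandY"], ["BrandY"], ["BrandX", "BrandZ"], ["BrandX"]],
    "engine_b": [["BrandY", "BrandZ"], ["BrandX"]],
}

shares = {
    engine: citation_share(answers, "BrandX")
    for engine, answers in SAMPLED_ANSWERS.items()
}
for engine, share in shares.items():
    print(f"{engine}: {share:.0%}")
```

Tracking the same query set over time matters more than any single reading, since AI answers vary from run to run.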

A Practical Guide to User Intent Optimization

This section is for marketers, founders, and operators who need a repeatable workflow, not theory.

Start with the intent, then decide the answer shape

For any target query cluster, write down: the job the user is hiring the AI for (decide, learn, compare, do, or go somewhere); the likely follow-up questions in the same conversation; and the expected output format, whether that is a definition, checklist, comparison table, template, or "what should I do next."

Then build the page to deliver that answer shape. If the user wants a decision, do not publish a glossary entry. If the user wants steps, do not publish a thought leadership essay.

Put the direct answer first, then expand

A simple rule that works across engines: put the direct answer on the first screen, then expand. This supports passage selection systems and AI features that assemble responses from multiple sources. Every page should open with a 40- to 80-word summary that directly answers the primary question, followed by a deeper explanation, supporting evidence, and related questions.

For example, instead of three paragraphs of background before defining SOC 2, lead with: "SOC 2 is a third-party audit of how you protect customer data. If you sell B2B SaaS, you often pursue it to pass security reviews. Most teams choose Type I for speed, then Type II for proof over time." Then add the details. This is not dumbing down. It is serving the intent, fast.

Cover the full intent, not just the top layer

Because systems may use query fan-out, a single query can trigger multiple sub-searches. Your page should anticipate the sub-questions that complete the task. If the query is "best payroll software Canada," users often want compliance coverage, pricing models, integrations, setup time, and guidance on which company size each tool fits best. If you only list "top 10 tools," you match the topic but do not satisfy the decision intent.

Make your content easy to cite

In practice, "easy to cite" means: short, specific statements supported by context (define terms, name constraints, state assumptions, keep one idea per paragraph); clear section headings that match real questions (because BERT-era query understanding is tuned for natural language nuance); and evidence signals that build trust, such as original data, expert authorship, and references from other authoritative sources.

Include statistics and credible data where relevant. AI engines respond well to numbers and verifiable facts, which makes content more likely to be retrieved and cited. Write in a conversational, natural tone that mirrors how people speak, while being explicit and declarative rather than vague.

Use structured data to reinforce intent signals

Schema markup acts as a translation layer between your content and AI systems. While invisible to readers, it tells a crawler what type of thing each page describes. For intent optimization, consider using Organization schema (to define your brand), FAQPage schema (to mark specific questions and answers), HowTo schema (for step-by-step processes), Product and Offer schema (for transactional queries), and Person schema on the author property (to link content to verified expertise).
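As an example, question-and-answer markup can be generated with a few lines of Python. This sketch builds JSON-LD using schema.org's FAQPage, Question, and Answer types. The helper name `faq_jsonld` and the sample question are assumptions for illustration.

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

markup = faq_jsonld([
    ("What is SOC 2?", "SOC 2 is a third-party audit of how you protect customer data."),
])
print(markup)
```

The resulting string is placed in the page inside a `<script type="application/ld+json">` element. The marked-up questions and answers should match what is visible on the page.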

Common Mistakes That Quietly Kill Intent Alignment

- Targeting keywords instead of outcomes. If a page does not help the user finish their task, matching the phrase is not enough. Google explicitly frames relevance as more than keyword repetition. A page that mentions a keyword fifty times but fails to answer the user's secondary questions will be outperformed by a page that uses the keyword once but provides a comprehensive solution.

- Mixing multiple incompatible intents on one page. A page that tries to be a definition, a product landing page, and a comparison chart often ends up serving none of them well. Passage ranking can help, but only if sections are clean and focused.

- Burying the lead. If the AI has to read 1,000 words of introductory text to find the answer, it will likely skip the page and find a source that is more direct.

- Ignoring context like location and recency. Google's documentation says context signals affect what counts as relevant. Outdated or inaccurate information is a quality problem.

- Writing for robots, not readers. Google's people-first content guidance explicitly warns against content created to manipulate rankings rather than help people. In an AI answer context, content that exists only to rank often lacks the concrete substance an answer engine needs.

- Ignoring entity signals. Some brands create great content but fail to link it to their identity. Without proper schema and clear branding, the AI might use the information but fail to cite the brand. The content becomes "public knowledge" instead of "brand authority."

What This Means for Content Quality

In the traditional SEO era, brands could sometimes succeed by producing high volumes of mediocre content targeting specific keywords. That strategy fails in the AI era. Generic content is easily summarized by the AI itself, meaning the user has no reason to visit the source. If an AI can answer a user's question from its own training data, it has little reason to cite a source.

A brand only gets cited when it provides something the AI does not already have or when it provides the most authoritative, up-to-date version of that information. This means clarity and completeness are now more important than word count. A 500-word article with a unique expert perspective or proprietary data is more valuable than a 3,000-word article that simply summarizes what everyone else is saying.

To remain visible, brands must become primary sources of information. This can be achieved through:

- Original Research: Conducting surveys, analyzing industry trends, and publishing proprietary data.

- Technical Specs: Providing deep, granular details about products or services that generic AI models might lack.

- Case Studies: Sharing real-world results with specific numbers and outcomes.

These demonstrate expertise in ways that AI cannot easily replicate, and they give the AI a reason to cite you rather than generate its own version of the same information.

Conclusion: Intent as the Final Frontier

Answer Engine Optimization (AEO) is the practice of shaping content so that answer-first systems can use it confidently. It is not a replacement for traditional SEO; it is an evolution of it.

Google's guidance for AI features in Search is clear: the best practices for SEO remain relevant, and there are no special extra requirements to appear in AI Overviews or AI Mode. Pages still need to be indexable and eligible to show in Search with a snippet. There is no separate "AI-only index."

What changes is the standard for "helpful." The system is not just ranking your page; it is trying to use it. AEO is the "last mile" of content strategy: while SEO ensures your website is crawlable and has good authority, AEO ensures the information on those pages is formatted in a way AI can actually work with.

The credible, non-salesy framing is this: AEO is not a trick. It is intent alignment plus evidence clarity. User intent is the foundation because it determines what "helpful" means, which determines what gets selected and cited. When you do user intent optimization well, you are not just optimizing content. You are building the best available evidence for the job the user is trying to complete.

And one unique aspect of AI visibility is that it compounds over time. When an AI model cites a brand as an authority, that citation can influence future retrieval. This creates a virtuous cycle where being a trusted source leads to more citations, which further strengthens the brand's entity recognition.

In answer-first search, that is often the difference between being visible and being ignored.